Do Cats Dream of LED Arrays?

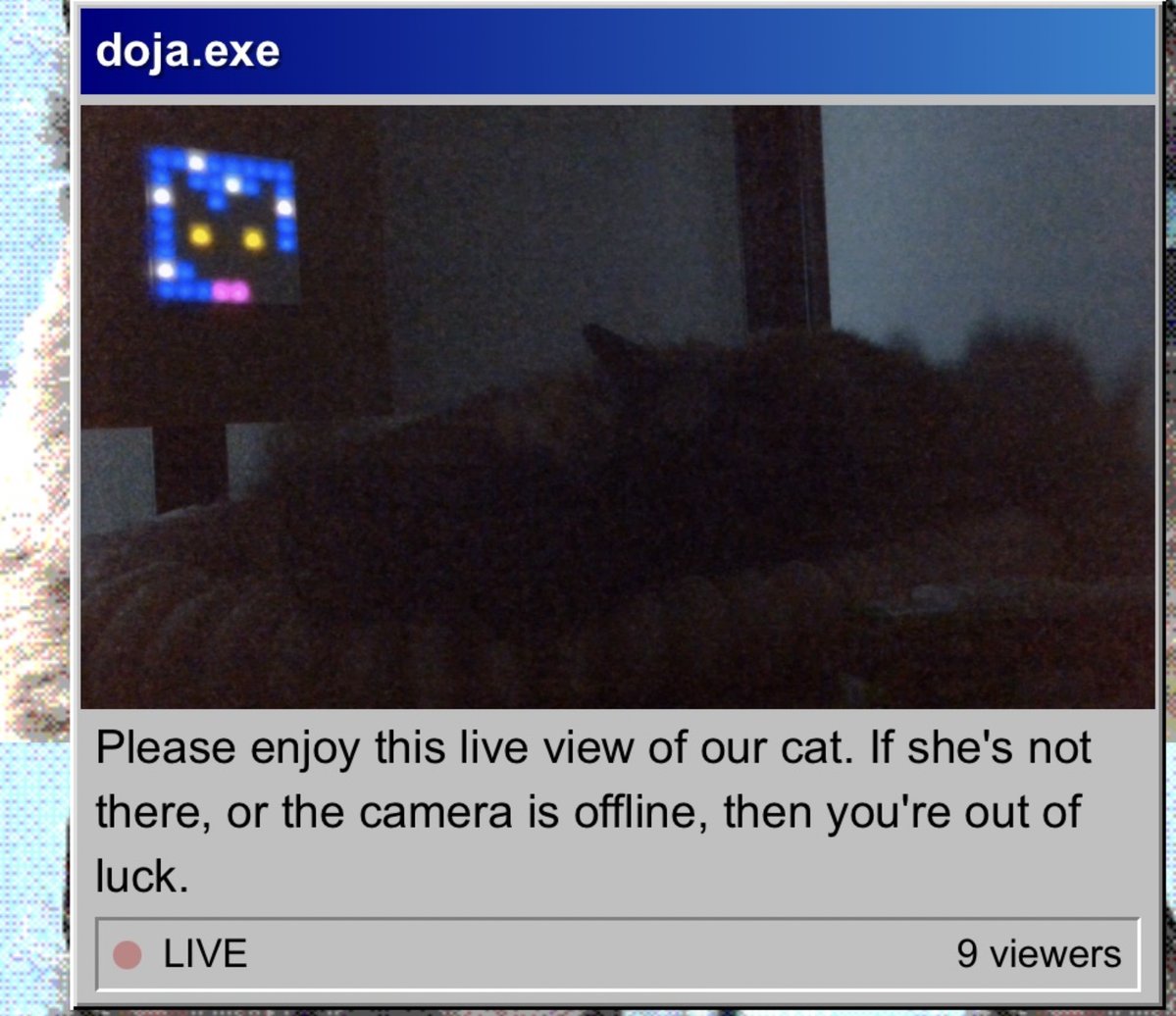

If you follow me on Mastodon or read this blog, you probably know we have a cat called Doja. When I'm working, Doja often sleeps on a rack behind me. It started with her sleeping on a pile of boxes. I upgraded her boxes to a proper bed, and eventually cleared that part of the rack of boxes altogether. One day I decided it would be cool to have a stream of her sleeping and performing other activities, and so the Doja Cam™ was born.

If you don't care about the details, you can view the camera here and draw her a picture here.

Cat Detection

My first challenge was getting people to watch her. People don't want to watch an empty cat bed, but they do want to watch cats, especially on the internet. I run my own Mastodon server, so why not give my cat her own account? With that, I could broadcast whenever she's sleeping.

So how do you detect a cat? At first I wanted to do some simple frame diffing. The idea is simple: take the average value of the pixels, and if that average changes a lot, make the bold assumption that there is now a subject in the frame. Of course, this would also trigger if I turned on my lights, moved the cat bed, or did anything else that changes the pixels.
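To make the frame diffing idea concrete, here is a minimal sketch of it. I never shipped this, so treat it as an illustration only: the function names and the threshold of 10 are made up, and the frame is a flat buffer of grayscale values to keep the example free of dependencies (with OpenCV you would call `frame.mean()` on the NumPy array instead).

```python
from typing import Sequence


def mean_pixel_value(pixels: Sequence[int]) -> float:
    """Average value of all pixels in a frame (flat buffer of 0-255 values)."""
    return sum(pixels) / len(pixels)


def frame_changed(previous: Sequence[int], current: Sequence[int],
                  threshold: float = 10.0) -> bool:
    """Return True when the average pixel value moved by more than `threshold`.

    The threshold is a hypothetical value; it would need tuning per camera.
    """
    return abs(mean_pixel_value(current) - mean_pixel_value(previous)) > threshold


# The same empty cat bed, once in a dim room and once with the lights on.
empty_bed = bytes([40]) * (64 * 48)
lights_on = bytes([120]) * (64 * 48)

print(frame_changed(empty_bed, empty_bed))  # nothing changed
print(frame_changed(empty_bed, lights_on))  # fires, although there is no cat
```

The second call is exactly the false positive described above: the average jumps, but the bed is still empty.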

This is unacceptable, because again, no one wants to see an empty cat bed. So I did some shopping around and found that YOLO has built-in cat detection. Of course it does. From there on it's simple.

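```python
# Excerpt from inside the detection function; cv2 and the Django settings are imported elsewhere.

# Get a frame
cap = cv2.VideoCapture(settings.DOJA_STREAM)
ret, frame = cap.read()
cap.release()

# Bail out if no frame could be read from the stream.
if not ret:
    return None

# Get an instance of YOLO.
model = _get_model()

# Run detection on the frame.
results = model(frame, verbose=False)

# Loop through all results.
for result in results:
    # Loop through the class IDs of the detected boxes.
    for cls in result.boxes.cls:
        # Check if the classification is 15, which is cat.
        if int(cls) == 15:
            return frame
```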

If a frame is returned, we know the cat is in the frame. I store this result because I want to chart when Doja is present and when she isn't. Now that we can detect Doja, we can broadcast to Mastodon that she is probably taking a nap on video.

And we're done!

How Many People Are Watching This?

My next question was, how many people are actually watching this? This was kinda trivial but also not.

First, the backend generates a UUID, which is sent to the frontend when the HTML is rendered.

The frontend then sends this UUID in the X_VIEWER_KEY header whenever it retrieves the stream.

Everything is Django-based, but having Django serve static files or streams is silly. However, we still need to be able to process each of these requests in the backend.

For this, we can use auth_request, which makes nginx send an internal subrequest to our server, passing on all headers, before it serves the file.

```nginx
location /doja-cam/ {
    auth_request /auth/hls/;
}
```

We then update a sorted set in Redis, using the current time as the viewer's score, and remove any viewers whose last request falls outside the TTL.

```python
redis = get_redis_connection("default")
redis.zadd("viewers:doja", {viewer_key: time.time()})
redis.zremrangebyscore("viewers:doja", "-inf", time.time() - VIEWER_TTL)
```

Then to get the viewer count, we simply count the members and return that:

```python
redis.zcard("viewers:doja")
```

Every time a viewer's player retrieves a new segment of the stream, the request goes through this endpoint. Of course, it's easy to fuck up the stats, send fake requests, or generate tons of UUIDs, but no one will really give a shit, including me.
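If you want to play with the counting logic without Redis, here is a rough in-memory sketch of the same idea. It is not the real code: `ViewerCounter` and the 30 second TTL are made up for illustration, and a plain dict stands in for the sorted set (key = viewer UUID, value = last-seen timestamp).

```python
import time
import uuid
from typing import Dict, Optional

VIEWER_TTL = 30  # seconds; hypothetical value, the real TTL is not stated


class ViewerCounter:
    """In-memory stand-in for the Redis sorted set of viewers."""

    def __init__(self, ttl: float = VIEWER_TTL) -> None:
        self.ttl = ttl
        self._last_seen: Dict[str, float] = {}

    def touch(self, viewer_key: str, now: Optional[float] = None) -> None:
        """Record a segment request (ZADD), then drop stale viewers (ZREMRANGEBYSCORE)."""
        now = time.time() if now is None else now
        self._last_seen[viewer_key] = now
        cutoff = now - self.ttl
        # Keep only viewers seen strictly after the cutoff.
        self._last_seen = {k: t for k, t in self._last_seen.items() if t > cutoff}

    def count(self) -> int:
        """Number of viewers currently tracked (ZCARD)."""
        return len(self._last_seen)


counter = ViewerCounter(ttl=30)
alice, bob = str(uuid.uuid4()), str(uuid.uuid4())
counter.touch(alice, now=0)
counter.touch(bob, now=10)
print(counter.count())      # both viewers are within the TTL
counter.touch(bob, now=45)  # alice has not requested a segment for 45 seconds
print(counter.count())      # only bob is left
```

Note that stale viewers are only pruned when someone requests a segment, so the count can lag a bit when the last viewer leaves. For a cat camera that is fine.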

Draw Me Like One of Your Pixelated French Girls

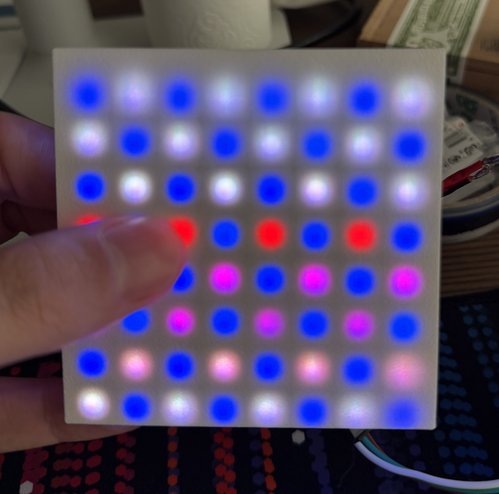

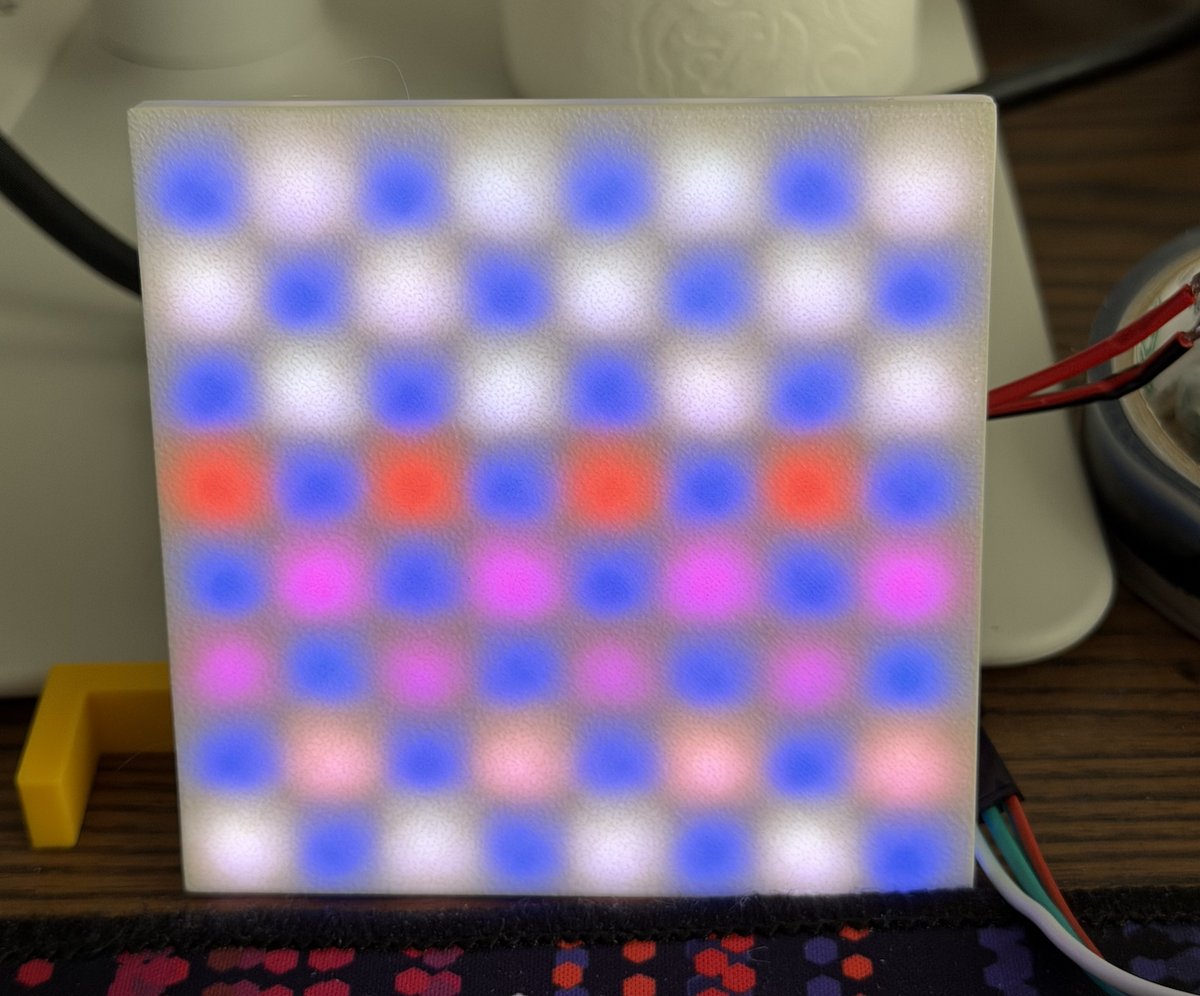

OK, finally we get to the picture frame. A while back I bought some WS2812B 8x8 LED arrays because they seemed fun to play around with. At night, it's kinda hard to see Doja in the frame, and this gave me an idea: a studio spotlight that I could control. The idea was simple. LED array => ESP32 => Control Panel.

Having a bright spotlight above your cat so people can see her probably borders on animal abuse, so I decided against it, but I couldn't shake the idea. Then it hit me: 8x8. It would be cool if we could display pixel art. And then it hit me even more: people love drawing. What if I had some sort of interface that allows people to make drawings for Doja?

The Backend

And so Doja Paint was born. It works quite simply. Via this website you can access the Paint.exe "app".

We store each drawing as an array of 192 bytes: 8 x 8 pixels with three bytes each, one for the red, one for the green, and one for the blue channel, each with a value between 0 and 255.

If the first pixel is black, it is stored as 0, 0, 0. If the neighbouring pixel is red, the array starts with 0, 0, 0, 255, 0, 0, [...], et cetera.
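As a small illustration of that layout, this sketch packs a grid of pixels into the 192 bytes and back. The function names are made up, and I'm assuming here that pixels are stored row by row, which is a guess for the sake of the example.

```python
from typing import List, Tuple

GRID_SIZE = 8  # the array is 8x8
CHANNELS = 3   # red, green, blue
DRAWING_BYTES = GRID_SIZE * GRID_SIZE * CHANNELS  # 192

Pixel = Tuple[int, int, int]


def pack_drawing(pixels: List[Pixel]) -> bytes:
    """Flatten 64 (r, g, b) tuples into 192 bytes; bytes() rejects values outside 0-255."""
    if len(pixels) != GRID_SIZE * GRID_SIZE:
        raise ValueError("expected 64 pixels")
    return bytes(channel for pixel in pixels for channel in pixel)


def unpack_drawing(data: bytes) -> List[Pixel]:
    """Split 192 bytes back into 64 (r, g, b) tuples."""
    if len(data) != DRAWING_BYTES:
        raise ValueError("expected 192 bytes")
    return [tuple(data[i:i + CHANNELS]) for i in range(0, len(data), CHANNELS)]


# First pixel black, its neighbour red, everything else black.
pixels = [(0, 0, 0), (255, 0, 0)] + [(0, 0, 0)] * 62
data = pack_drawing(pixels)
print(len(data))       # 192
print(list(data[:6]))  # [0, 0, 0, 255, 0, 0]
```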

Then we have an API endpoint that looks up the drawing that was most recently put on display and checks how long it has been shown.

If it's been displayed for more than 60 seconds, we get the oldest drawing that has not been shown yet. All this API does is return the ID of the drawing to display.

And finally we have an API that gets the bytes for the drawing we wish to show.
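The rotation logic could be sketched roughly like this. This is not the actual Django code: the `Drawing` fields and the function name are hypothetical, and what happens when nothing new is waiting (keep showing the current drawing) is my assumption for the sketch.

```python
from dataclasses import dataclass
from typing import List, Optional

DISPLAY_SECONDS = 60


@dataclass
class Drawing:
    id: int
    created_at: float
    shown_at: Optional[float] = None  # None means it has not been shown yet


def current_drawing_id(drawings: List[Drawing], now: float) -> Optional[int]:
    """Return the ID of the drawing the display should show right now."""
    shown = [d for d in drawings if d.shown_at is not None]
    pending = [d for d in drawings if d.shown_at is None]
    current = max(shown, key=lambda d: d.shown_at) if shown else None

    # Keep the current drawing while its 60 seconds are not up,
    # or (assumption) when there is nothing new to rotate to.
    if current is not None and (now - current.shown_at <= DISPLAY_SECONDS or not pending):
        return current.id
    if not pending:
        return None

    # Otherwise promote the oldest drawing that has not been shown yet.
    next_up = min(pending, key=lambda d: d.created_at)
    next_up.shown_at = now
    return next_up.id


queue = [Drawing(1, created_at=0, shown_at=100), Drawing(2, created_at=10), Drawing(3, created_at=20)]
print(current_drawing_id(queue, now=130))  # 1, still within its 60 seconds
print(current_drawing_id(queue, now=161))  # 2, the oldest unseen drawing takes over
```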

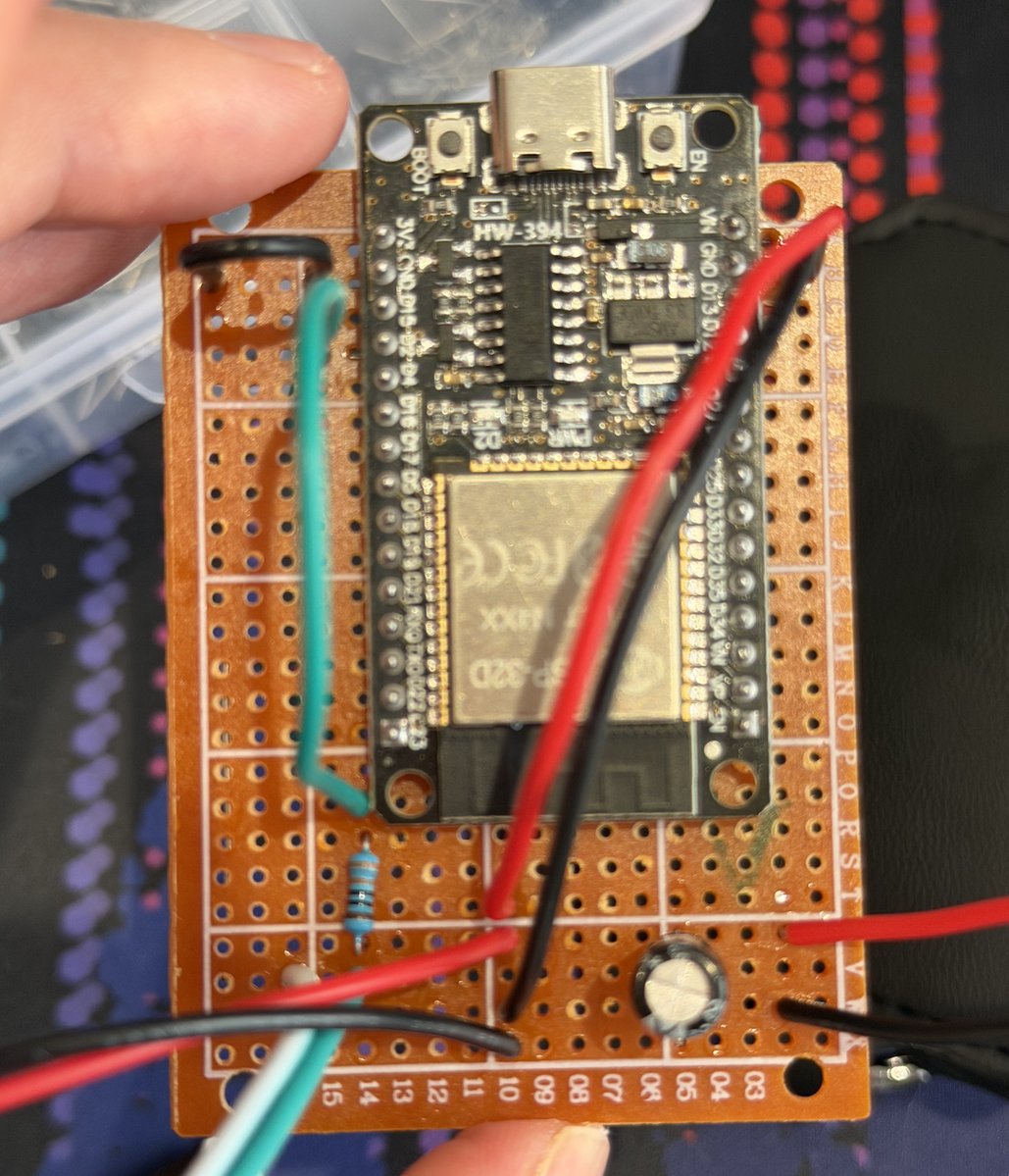

The Hardware

If you're familiar with IoT/embedded programming, you know about the ESP32. It's like Arduino on crack, with built-in Bluetooth and WiFi, so it was an excellent platform for my idea.

At boot, the ESP32 connects to my local network, does a quick check to see if the LED array works, and then calls the API that returns the ID of the drawing to display. If the ID does not match the stored ID, which is 0 by default, we know the drawing "changed", and we call the API to retrieve the bytes. We simply read one byte at a time and use this information to update each pixel individually. This all happens almost instantly. Two seconds later we query the drawing API again and check whether the ID differs from the one we stored. If it does, we fetch the new drawing; if not, we wait and ask again. Repeat ad infinitum.
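The polling loop is easier to follow in code. The real firmware runs on the ESP32, so the Python below is only a sketch of the logic: `DrawingPoller` is a made-up name, and the two API calls and the LED update are passed in as plain functions so the example runs anywhere.

```python
from typing import Callable, Dict, Tuple

Pixel = Tuple[int, int, int]


class DrawingPoller:
    """Fetch a drawing only when the ID reported by the API changes."""

    def __init__(self,
                 fetch_id: Callable[[], int],
                 fetch_bytes: Callable[[int], bytes],
                 set_pixel: Callable[[int, Pixel], None]) -> None:
        self.fetch_id = fetch_id
        self.fetch_bytes = fetch_bytes
        self.set_pixel = set_pixel
        self.stored_id = 0  # default ID, so the first real drawing counts as a change

    def poll_once(self) -> bool:
        """Return True if a new drawing was fetched and displayed."""
        drawing_id = self.fetch_id()
        if drawing_id == self.stored_id:
            return False
        data = self.fetch_bytes(drawing_id)
        # Three bytes (red, green, blue) per pixel.
        for index in range(len(data) // 3):
            r, g, b = data[3 * index:3 * index + 3]
            self.set_pixel(index, (r, g, b))
        self.stored_id = drawing_id
        return True


# Fake API and fake LED array for demonstration purposes.
leds: Dict[int, Pixel] = {}
poller = DrawingPoller(
    fetch_id=lambda: 7,
    fetch_bytes=lambda drawing_id: bytes([255, 0, 0]) * 64,
    set_pixel=leds.__setitem__,
)
print(poller.poll_once())  # True: ID 7 differs from the default 0
print(poller.poll_once())  # False: same ID, nothing to fetch
```

On the device, the equivalent of `poll_once()` would sit in an endless loop with a two second sleep between calls.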

The ESP32 board soldered to some perfboard

The whole thing is powered by a USB-A to USB-C brick. I initially wanted to use USB-C to USB-C, but I bought a cheap receptacle from AliExpress which did not have the proper connections for PD negotiation. Luckily, USB-A bricks usually output 5 V without negotiating anything.

Brightness and Cheap Cameras

One issue I soon faced when testing is that accurately representing colors is hard. To improve the display, I designed a new diffuser for the LED array that featured a built-in grid to prevent colors from leaking.

With this new diffuser I could amp up the brightness without colors leaking into the neighbouring pixels. However, at this point my camera became the issue: the LEDs would overpower the natural light, and the camera would respond by turning down its exposure. I eventually found a brightness setting that works well both day and night. Of course, this is also controlled via an API, should I ever upgrade my camera or should the lighting conditions change.
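One common way to dim LEDs like these in software is to scale every channel value before sending it to the array. The sketch below shows that approach; it is an assumption about how brightness could be applied, not necessarily what my firmware does, and `apply_brightness` is a made-up name.

```python
def apply_brightness(data: bytes, brightness: float) -> bytes:
    """Scale every channel value by `brightness`, a factor between 0.0 and 1.0."""
    if not 0.0 <= brightness <= 1.0:
        raise ValueError("brightness must be between 0.0 and 1.0")
    # int() truncates, so values never exceed the original and stay within 0-255.
    return bytes(int(value * brightness) for value in data)


full_red_pixel = bytes([255, 0, 0])
print(list(apply_brightness(full_red_pixel, 0.5)))  # [127, 0, 0]
```

The downside of scaling like this is that dark colors lose detail at low brightness, which is one more reason to settle on a single value that works day and night.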

Badaboom Badabing

There you have it! A way for people to send drawings to my cat and display them. Of course within a few hours the first poorly drawn dicks made it onto the display. But so far the drawings have been absolutely lovely.

I particularly enjoyed Doja sleeping under her own portrait.

And I enjoyed this mug too. It reminds me of Kraftwerk's Autobahn record.

Postscripts

Shortly after posting this, someone made a beautiful jizz cock. Well done!